Designing a Scalable AI Assistant Framework for GenAI

How can designers quickly create AI-powered assistants without missing key features or reinventing the wheel?

Role:

Service, UX Designer & Researcher

Tools:

Figma, Miro

Project Overview

Our agency needed a consistent, scalable way to design AI assistants.

Mission

Build a GenAI framework (roadmap, templates, and paradigms) that accelerates design, aligns stakeholders, and ensures robust, user-centered platforms.

Symptoms

- Fragmented design approaches

- Clients unclear on AI assistant value

- PMOs struggling with scoping

Root Cause

No structured methodology for AI assistant design.

Impacts

- Slower delivery

- Missed features

- Low trust in scalability

Design Challenge

Create a framework that guides designers step by step, from scoping and personas to features, paradigms, and principles, while giving clients and PMOs clarity.

1) Lack of Structure

2) Missing Features

3) Inconsistent Principles

4) Client & PMO Alignment

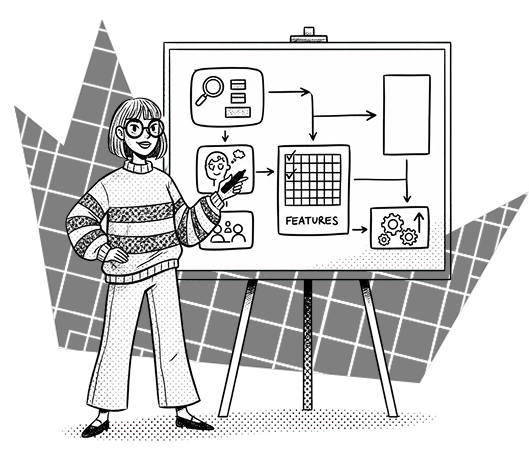

Process & Approach

Provided a roadmap

Added a clear roadmap: problem → persona → AI values → features.

Outcome

Repeatable, guided workflow.
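As a rough illustration, the roadmap can be treated as an ordered checklist. The sketch below is not part of the framework itself; the function name `next_stage` and the idea of tracking completed stages in a set are assumptions made for the example. Only the stage order comes from the roadmap above.

```python
from typing import Optional, Set

# Stage order taken from the roadmap: problem -> persona -> AI values -> features.
ROADMAP = ["problem", "persona", "AI values", "features"]


def next_stage(completed: Set[str]) -> Optional[str]:
    """Return the first roadmap stage not yet completed, or None when all are done.

    Hypothetical helper: stages are always checked in roadmap order, so a
    skipped earlier stage is surfaced before any later one.
    """
    for stage in ROADMAP:
        if stage not in completed:
            return stage
    return None
```

Checking in order means a designer who jumps ahead to features is still pointed back to the earliest stage left open, which is the sense in which the workflow is guided.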

Created Templates

Created feature templates: core, optional, advanced.

Outcome

Covered gaps, future-ready design.
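One way to picture a feature template is as a tiered checklist that flags gaps in a planned scope. This is a minimal sketch under stated assumptions: the class name `FeatureTemplate`, its methods, and the placement of each feature in a tier are hypothetical and chosen only for illustration. The three tiers and the feature names (memory, prompts, handoff, explainability) come from this case study.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set

# The three tiers named in the feature templates.
TIERS = ("core", "optional", "advanced")


@dataclass
class FeatureTemplate:
    """Hypothetical tiered feature checklist for one assistant design."""

    features: Dict[str, List[str]] = field(
        default_factory=lambda: {tier: [] for tier in TIERS}
    )

    def add(self, tier: str, name: str) -> None:
        """Register a feature under one of the known tiers."""
        if tier not in TIERS:
            raise ValueError(f"unknown tier: {tier}")
        self.features[tier].append(name)

    def missing(self, tier: str, planned: Set[str]) -> List[str]:
        """List the features in a tier that the planned scope does not cover."""
        return [name for name in self.features[tier] if name not in planned]


# Assumed tier assignment, for illustration only.
template = FeatureTemplate()
template.add("core", "prompts")
template.add("core", "memory")
template.add("optional", "handoff")
template.add("advanced", "explainability")

# A scope that plans prompts and handoff still lacks one core feature.
gaps = template.missing("core", {"prompts", "handoff"})
```

Running the gap check against each tier during scoping is one way a template could help catch missed features early.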

Defined Principles

Defined design principles & UI paradigms.

Outcome

Educated designers and clients on AI design.

Designed the skeleton

Built high-fidelity skeleton types with their interactions

Outcome

Faster approval, smoother execution, and less repetition.

Design Solutions

1) Designed Artifacts

Roadmap, personas, feature lists

2) Features Library

Memory, prompts, handoff, explainability

3) Designed Paradigms

Chat, inline, proactive

4) Defined Principles

Transparency, trust, scalability, tone


Results & Impact

Business

Faster AI projects, clearer scope

Customers

Clarity, stronger trust in solutions

Operations

Unified workflow, better PMO scoping

Reflection

Standardizing the process freed designers to focus on creativity while reducing risk.

Next step: add metrics to measure assistant performance post-launch.